Nonspecific Amplification

The stories we tell about technology don't describe reality: they create it. The question isn't where AI is going — it's where we're pointing it.

Sam Altman's house is on fire.

Not metaphorically — someone actually set it on fire. Two days later, it was targeted in a drive-by shooting. And while that's extreme, it's also a signal. AI has a messaging problem. But it's not the messaging problem most people are talking about.

Ethical questions about training data and creative labor are real. So are concerns about LLM energy use and water consumption. And yes, the alignment folks have legitimate arguments about existential risk. But another drumbeat grows louder — one coming not from AI's critics, but from its biggest cheerleaders and the frontier labs themselves. The drumbeat says: mass unemployment is coming. Soon. The K-shaped economy is about to get a lot more K-shaped. And if you don't figure out how to ride this wave, you're heading for the permanent underclass.

They're not wrong. The technology is moving that fast. But we're not thinking critically enough about what it means to treat that outcome as inevitable. What it means for the people building this stuff to shrug and say universal basic income (UBI) isn't their problem. What it means to promise abundance while most people are already struggling to afford groceries.

Nonspecific Amplifiers

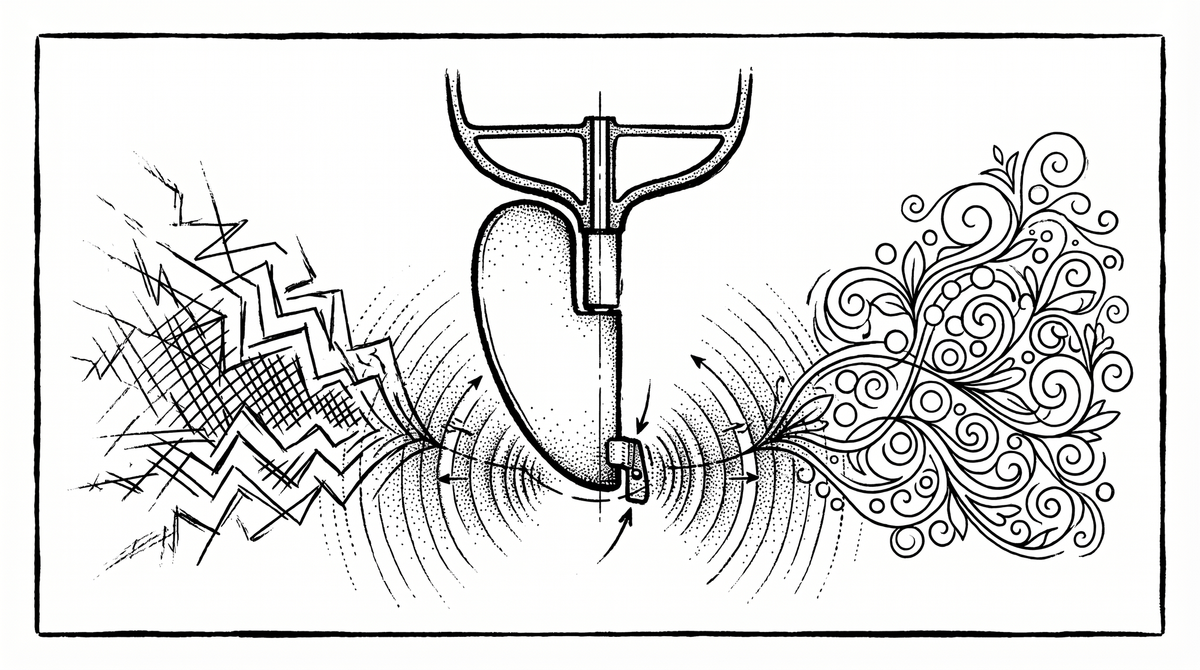

This is where Stanislav Grof comes in. Grof, the psychedelic researcher, called psychedelics "nonspecific amplifiers." Taking LSD doesn't mean you're automatically going to end up in a drum circle in Golden Gate Park wearing tie-dye and smelling of patchouli. There's nothing in the chemical substrate that confers peace and love. Some people take LSD and write poetry. Others take it and join a cult. The molecule doesn't know the difference.

AI works the same way. Nothing about its substrate necessitates a permanent underclass. But nothing about it guarantees utopia either. It amplifies whatever direction you're already facing — and accelerates you there at the speed of light. The direction is the only thing that matters. And right now, the direction is extraction.

Hyperstition Is Real

The stories we tell ourselves don't describe reality. They create it. This is hyperstition: the fiction that makes itself real. When AI advocates cheerlead for mass unemployment and social stratification, they aren't offering a neutral prediction. They're making a choice. And that choice is generating exactly the world it describes.

I propose a better story for the cosmic interweaving of human and machine. One where technology functions as a deus ex machina — not to replace us, but to liberate us. Where our intention is human flourishing. Where we use these tools to bring about the forms of easeful abundance we know we're capable of.

I'm not naive about this. On balance, I'm pro-AI — extremely pro, with heavy reservations in specific domains. But my optimism comes with a fixed ethical viewpoint: our optimization function should be abundance, the free distribution of resources, and technology that works for humans without extracting from the natural world. I have every intention of being a good ancestor.

The Toolkit

Fifteen years ago, Charles Eisenstein published Sacred Economics — a book that treats capitalism not as natural law, but as cultural mythology. A story we chose to buy into. In AI parlance: capitalism is just one system prompt. We can have others.

(Yes, I'm the kind of person who references a 2011 economics book in an AI essay. We're going to get through this together.)

There's another framing for this: Game A and Game B. Game A is the exploitative, extractive, zero-sum race to the bottom that's dominated the last ten thousand years. Game B is regenerative — based on cohesion, collaboration, and the kind of society where technology serves life instead of the other way around.

Grof's frame is the one I keep coming back to. The amplifier doesn't confer worldview. Whichever direction you're facing, you'll travel there faster. That makes it our responsibility to choose the direction. To be what Buckminster Fuller called a trim tab: the small flap on the edge of a rudder whose tiny adjustments, over thousands of miles, change the course of an entire ship.

Yes, we need societal-level changes in how we conceive and distribute AI. But changing direction can also be as simple as each of us choosing to nudge the rudder a tiny bit.

We've Been Here Before

I know how this story can go wrong because I've watched it happen before.

At its best, crypto represented something beautiful. The possibility of divesting from the petrodollar as global reserve currency. A substrate for planetary-scale coordination outside the control of mega-bankers and hegemonic institutions. NFTs as mass patronage of the arts — artists owning their distribution without middlemen. DAOs as digital-native organizations for community-level resource distribution. All of it pointing toward a post-capitalist, non-extractive, positive-sum world.

That was one vision. The vision that won was the casino. Meme coins. Lamborghinis in Miami. The totally vacuous pursuit of personal gain. Ten Thousand Adjective Animals projects and the grift that followed.

I was there. I saw where the technology could go. I watched the nonspecific amplifier speed toward extraction instead of liberation. Having lost one story — watching one beautiful possible-future get co-opted by Game A's worst instincts — I'm not prepared to let AI go the same way.

Why AI Is Harder

AI is harder than crypto in every way that matters. It's moving faster. The negative externalities are orders of magnitude larger. But so is the positive potential. The question isn't whether this technology will transform society. It's whether we'll still have public sentiment on our side when it does. Whether we're sleepwalking into a cyberpunk future when we could be building solarpunk. Whether we'll use our voices to imagine something beautiful — or just let the default optimization function run its course.

Zero-Human Companies

There's a movement around what people are calling "zero-human companies": businesses run entirely by agentic systems, with no humans in the loop. The off-the-shelf version of this is predictable: automated widget optimizers designed to extract maximum value. Profit as the only optimization function.

But what if it wasn't?

What if we had teams of agents collaborating with the explicit goal of maximizing human flourishing? What if the CFO of an agentic company believed in the principles of Sacred Economics — full-cost accounting that respects ecological and social value, negative interest that prevents hoarding, distribution that prioritizes need over accumulation? What if we seeded exponential intelligences with Game B principles and let them grow?

I don't have the full blueprint. Nobody does. But the reframe is the point. The very tools being built for extraction could be redirected toward the world we actually want to see.

Trim Tabs

Fuller's trim tab works at every scale. Yes, there's a society-level story that needs rewriting. But it can also be as simple as each of us nudging the rudder a fraction of a degree. Choosing different words when we talk about AI. Building different products. Imagining different outcomes.

We are the good ancestors we are waiting for. And the direction is the only thing that matters.